Risk Savvy: How to Make Good Decisions

by Gerd Gigerenzer

Gigerenzer makes a distinction between risk and uncertainty. He uses the term risk “for a world where all alternatives, consequences, and probabilities are known… Most of the time, however, we live in a changing world where some of these are unknown: where we face unknown risks, or uncertainty. The world of uncertainty is huge compared to that of risk… We have to deal with ‘unknown unknowns.’”

Risks can be calculated when three conditions hold:

- low uncertainty: the world is stable and predictable,

- few alternatives: not too many risk factors have to be estimated, and

- a large amount of data: enough data are available to make these estimates.

“If risks are known, good decisions require logic and statistical thinking.”

“Under uncertainty, simple heuristics can save effort yet be more accurate at the same time.”

“In an uncertain world, complex decision making methods involving more information and calculation are often worse and can cause damage by invoking unwarranted certainty… Because these calculations generate precise numbers for an uncertain risk, they produce an illusory certainty… Analysis will not reduce uncertainty.”

The author also notes, “intuition is based on complex unconscious weighing of all evidence… Expertise is a form of unconscious intelligence.”

DEFENSIVE DECISION MAKING

“Risk aversion is closely tied to the anxiety of making errors. If you work in middle management of a company, your life probably revolves around the fear of doing something wrong and being blamed for it. Such a climate is not a good one for innovation, because originality requires taking risks and making errors along the way. No risks, no errors, no innovation… That’s not how to nurture great minds.”

“Serendipity, the discovery of something one did not intend to discover, is often a product of error.” This reminds me of the story of how Post-it Notes were developed at 3M.

“I use the term ‘error culture’ for a culture in which one can openly admit to errors to learn from them and to avoid them in the future… A system that makes no errors is not intelligent… Intelligence means going beyond the information given and taking risks.”

Defensive decision making is when “a person or group ranks option A as the best for the situation, but chooses an inferior option B to protect itself in case something goes wrong… Defensive decisions are not a sign of strong leadership and positive error culture.”

NATURAL FREQUENCIES

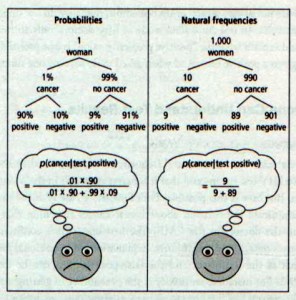

“There is a simple method to improve comprehension: to translate probabilities into natural frequencies… To represent a problem in natural frequencies, you begin with a number of people (here, one thousand women), who are divided into those with and without the condition (breast cancer); these are again broken down into two groups according to the new information (test result). The four numbers at the bottom of the right tree are the four natural frequencies. The four numbers at the bottom of the left tree are conditional probabilities. Unlike conditional probabilities (or relative frequencies), natural frequencies always add up to the number on the top of the tree.”
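The translation can be sketched in a few lines of Python. The figure's numbers are not reproduced in the text, so the inputs below are assumptions chosen for illustration: a prevalence of 1%, a test sensitivity of 90%, and a false-positive rate of 9%. The names `natural_frequency_tree` and `bayes_posterior` are hypothetical helpers, not anything from the book. The sketch turns the conditional probabilities into the four natural frequencies for 1,000 women, then compares the simple "positives with the condition out of all positives" reading with the same answer computed from conditional probabilities via Bayes' rule.

```python
from dataclasses import dataclass


@dataclass
class FrequencyTree:
    """Counts at each level of a natural frequency tree (illustrative)."""
    total: int
    with_condition: int
    without_condition: int
    true_positives: int   # condition present, test positive
    false_negatives: int  # condition present, test negative
    false_positives: int  # condition absent, test positive
    true_negatives: int   # condition absent, test negative


def natural_frequency_tree(total, prevalence, sensitivity, false_positive_rate):
    """Translate conditional probabilities into whole-person counts."""
    with_condition = round(total * prevalence)
    without_condition = total - with_condition
    true_positives = round(with_condition * sensitivity)
    false_positives = round(without_condition * false_positive_rate)
    return FrequencyTree(
        total=total,
        with_condition=with_condition,
        without_condition=without_condition,
        true_positives=true_positives,
        false_negatives=with_condition - true_positives,
        false_positives=false_positives,
        true_negatives=without_condition - false_positives,
    )


def bayes_posterior(prevalence, sensitivity, false_positive_rate):
    """The same question answered with conditional probabilities (Bayes' rule)."""
    hit = prevalence * sensitivity
    false_alarm = (1 - prevalence) * false_positive_rate
    return hit / (hit + false_alarm)


# Assumed illustrative inputs: 1% prevalence, 90% sensitivity, 9% false positives.
tree = natural_frequency_tree(1000, 0.01, 0.90, 0.09)
positives = tree.true_positives + tree.false_positives
print(f"{tree.true_positives} of {positives} women who test positive have the condition")
print(f"Bayes' rule gives {bayes_posterior(0.01, 0.90, 0.09):.3f}")

# Unlike conditional probabilities, the four bottom counts add up to the top of the tree.
assert (tree.true_positives + tree.false_negatives
        + tree.false_positives + tree.true_negatives) == tree.total
```

With these assumed inputs the tree yields 9 true positives and 89 false positives, so roughly 1 in 10 women with a positive result has the condition. The natural-frequency reading (9 out of 98) and Bayes' rule agree up to rounding, but only the first can be read straight off the tree.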

“In the mid-1990s, psychologist Ulrich Hoffrage and I demonstrated for the first time that the problem is not simply inside people’s minds, but in the way information is communicated: When the same problems are given in natural frequencies, people more readily understand them… Schools in several countries now include natural frequency trees in the textbooks, helping children to understand how to think about risk.”

Chapter 10 explains how confusion about the meaning of survival rates and mortality rates leads to overdiagnosis and unnecessary surgeries. “An icon box brings transparency to health care… People have a moral right to be informed in a transparent way, but they aren’t.”
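One well-known way survival rates mislead is lead-time bias: diagnosing a cancer earlier lengthens the time from diagnosis to death even when nobody lives a day longer. The Python sketch below uses hypothetical numbers, not data from the book: 10 people out of a group of 1,000 have a cancer that kills them at age 70 no matter when it is found. Without screening it is diagnosed at 67; with screening, at 60. The `Patient` class and helper functions are illustrative names only.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    age_at_diagnosis: int
    age_at_death: int  # assumed fixed by the disease, screened or not


def five_year_survival(patients):
    """Share of diagnosed patients still alive five years after diagnosis."""
    alive_after_five = sum(
        1 for p in patients if p.age_at_death - p.age_at_diagnosis > 5
    )
    return alive_after_five / len(patients)


def mortality_per_1000(patients, population):
    """Cancer deaths per 1,000 people in the whole group.

    In this toy model every patient dies of the cancer, so deaths equal cases.
    """
    return 1000 * len(patients) / population


POPULATION = 1000  # hypothetical group size
CASES = 10         # hypothetical number of people with the cancer

# Without screening the cancer is found late; with screening, early.
unscreened = [Patient(age_at_diagnosis=67, age_at_death=70) for _ in range(CASES)]
screened = [Patient(age_at_diagnosis=60, age_at_death=70) for _ in range(CASES)]

for label, group in [("no screening", unscreened), ("screening", screened)]:
    print(f"{label}: 5-year survival {five_year_survival(group):.0%}, "
          f"mortality {mortality_per_1000(group, POPULATION):.0f} per 1,000")
```

Five-year survival jumps from 0% to 100% while mortality stays at 10 per 1,000 in both groups. That is why a rising survival rate, on its own, says little about whether screening saves lives; mortality is the number that answers that question.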

REGULATION

“We need to break away from the traditional models and find new tools. As with the Basel regulations, the traditional way is to introduce complex regulatory systems and, if they don’t work, make them more complex. This is the way taken by many governments and organizations. A different vision is to ask: Is there a set of simple rules that can solve this complex problem?”

“The fact is that complex rules have been gamed by banks, and more complexity makes it easier to find loopholes and twist the thousands of estimates. All this has led to unproductive activities such as an adverse complexity race between bankers and regulators. Violations of simple rules are, in contrast, easier to detect.”

I think the author means complicated rules, rather than complex, but otherwise his point is convincing.

GOOD LEADERSHIP

- “First listen, then speak.

- If a person is not honest and trustworthy, the rest doesn’t matter.

- Encourage people to take risks and empower them to make decisions and take ownership…

- When judging a plan, put as much stock in the people as in the plan.”

Gerd Gigerenzer also wrote several other books, including How to Stay Smart in a Smart World: Why Human Intelligence Still Beats Algorithms (MIT Press, 2022); Gut Feelings: The Intelligence of the Unconscious (2008); Calculated Risks: How to Know When Numbers Deceive You (2003); and Reckoning With Risk: Learning to Live With Uncertainty (2003). He is also editor of Heuristics: The Foundations of Adaptive Behavior (2015).

Gigerenzer, Gerd. Risk Savvy: How To Make Good Decisions. Penguin, 2014. Buy from Amazon.com

Disclosure: As an Amazon Associate I earn from qualifying purchases.

Discover more from The Key Point
